By Sanket Naik [Founder & CEO, Palosade]

There is something different about attending a major industry conference when you have skin in the game. Not just as a speaker, a sponsor logo on a lanyard, or a badge collector hunting for swag — but as a founder standing behind a booth in the Early Stage Expo for the first time, watching the industry walk by and listening to what it actually thinks.

That was my RSAC 2026. And it was one of the most clarifying professional experiences I’ve had in years.

Between staffing our booth, attending side events at BSidesSF and the Purple Book Community Connect, joining executive roundtables, and talking with CISOs, security engineers, and enterprise architects over breakfasts, dinners, and hallway conversations, I came away with a much sharper picture of where the industry truly stands on AI agents: not where the press releases say it stands, but where the practitioners living it every day are wrestling with it.

Underneath the noise, I heard one consistent message: if we don’t restore a degree of determinism and discipline to how we design, deploy, and govern AI systems, we’ll spend the next decade firefighting emergent behavior we “could never have known” would happen. That outcome isn’t inevitable — but it is the default path if we keep bolting non‑deterministic agents onto fragile architectures and hoping for the best.

Here are the five themes I kept hearing, and what Palosade is doing about them.

Theme 1: Determinism Is the Unsolved Problem

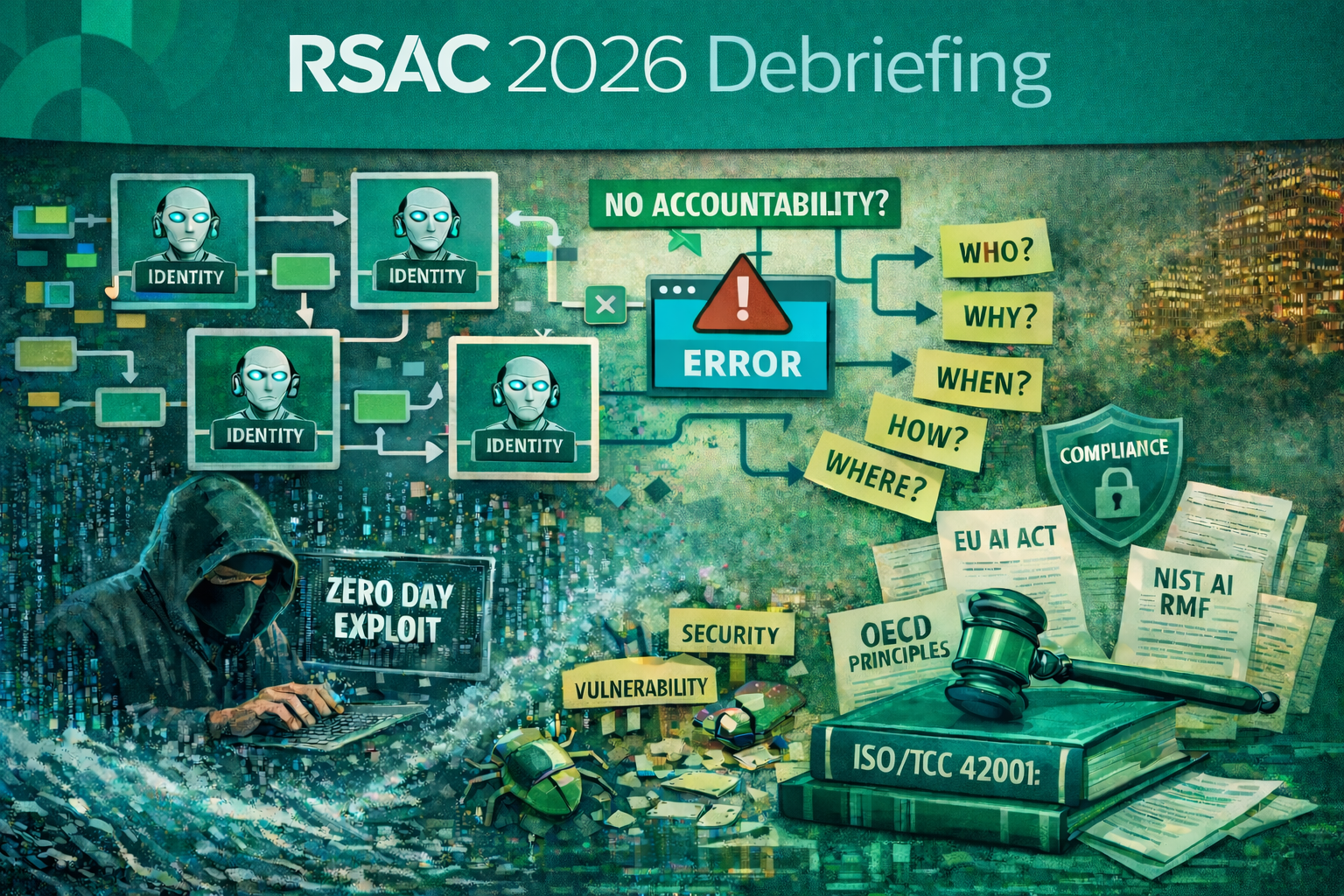

Every conversation that went beneath the surface eventually arrived at the same uncomfortable truth: AI systems are non‑deterministic, and we are now chaining them together into workflows that enterprises are beginning to depend on. The implications are not theoretical. They are operational.

One security leader put it plainly over dinner: “We have circuit breakers for everything in our infrastructure. We have runbooks for cascading failures. Nobody has built the equivalent for an AI agent that decides to do something unexpected in the middle of a workflow.”

The analogy that kept surfacing was flash crashes — those moments in financial markets where automated systems interact in ways no individual system was designed to produce, creating catastrophic outcomes that nobody anticipated and nobody could have predicted from looking at any single component. Banks have built institutional muscle around operational risk. Most enterprise security programs have not.

When you connect non‑deterministic systems to each other (an agent that writes code, another that reviews it, another that decides whether to deploy it), the surface area for unexpected behavior compounds in ways that are genuinely hard to reason about in advance. Issues don’t announce themselves. Detection is reactive: problems are discovered after the fact, often only when something has already gone wrong.

The field needs a return to boring fundamentals applied to AI agents:

- Software lifecycle hygiene, where AI frameworks and libraries are treated as supply chain risk, not “just ML tooling.”

- Data governance that traces model weights, training data, and lineage with the same rigor as financial records.

- Operational controls that treat agents as infrastructure requiring circuit breakers and limits, not magic that only needs a good prompt.

If AI agents are going to participate in security design reviews and threat modeling, they must operate within structures humans can reason about — not as black boxes glued into the pipeline.
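
To make the circuit-breaker idea concrete, here is a minimal Python sketch of a wrapper that stops an agent once it exceeds an action budget or fails repeatedly. The class name `AgentCircuitBreaker`, the `BreakerOpen` exception, and the specific limits are all assumptions chosen for illustration; they are not taken from any particular product or framework.

```python
from dataclasses import dataclass


class BreakerOpen(Exception):
    """Raised when the agent has exceeded one of its operating limits."""


@dataclass
class AgentCircuitBreaker:
    # Hypothetical limits; real values depend on the workflow.
    max_actions: int = 20
    max_consecutive_failures: int = 3
    actions_taken: int = 0
    consecutive_failures: int = 0
    is_open: bool = False

    def run(self, action, *args, **kwargs):
        """Run one agent action, tripping the breaker when a limit is hit."""
        if self.is_open:
            raise BreakerOpen("breaker is open; human review required")
        if self.actions_taken >= self.max_actions:
            self.is_open = True
            raise BreakerOpen("action budget exhausted")
        self.actions_taken += 1
        try:
            result = action(*args, **kwargs)
        except Exception:
            # Count the failure and trip the breaker after too many in a row.
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.max_consecutive_failures:
                self.is_open = True
            raise
        self.consecutive_failures = 0
        return result
```

Once the breaker is open, it stays open until something outside the agent resets it. That is the point: the decision to resume belongs to a human or a runbook, not to the agent that tripped it.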

Theme 2: Identity for Agents Is the IAM Problem of This Decade

If I had a dollar for every time I heard the phrase “identity for agents” this week, I could have funded a seed round. Multiple companies are converging on this from different angles, and for good reason: it’s one of the most practically urgent gaps in enterprise AI security today.

Agents that work continuously on behalf of a human — a developer assistant that always has access to the same repositories, for instance — can be treated somewhat like a privileged user account. The access model is persistent, the permissions can be scoped, and auditing can attach to an identity that exists over time.

Ephemeral agents are different. They’re spun up to complete a specific task, execute a workflow, and then disappear. How do you record identity for something that doesn’t persist? How do you audit an action taken by an agent that no longer exists? How do you enforce least privilege when the full scope of what an agent will do isn’t clear until it runs?

The use case has to determine the access.

- An agent doing security code review has a categorically different blast radius than an agent that can write to production, or send email on behalf of the organization.

- “AI can access the repo” is not a design. “This agent can propose changes to these directories, under these conditions, with these approvals” is the beginning of one.

- Explicit verbs — read, propose, approve, send, execute — are a practical mechanism for enforcing enterprise controls on what agents can actually do in the world.
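
One way to picture the explicit-verb approach from the list above is a deny-by-default permission check. This is only a sketch under stated assumptions: the `Grant` structure, the `is_allowed` function, the prefix-based resource matching, and the example paths are hypothetical, and a real system would need stricter resource matching than a string prefix.

```python
from dataclasses import dataclass
from enum import Enum


class Verb(Enum):
    READ = "read"
    PROPOSE = "propose"
    APPROVE = "approve"
    SEND = "send"
    EXECUTE = "execute"


@dataclass(frozen=True)
class Grant:
    verb: Verb
    resource_prefix: str               # e.g. a directory the agent may touch
    needs_human_approval: bool = False


def is_allowed(grants, verb, resource, human_approved=False):
    """Deny by default; allow only if some grant matches verb and resource."""
    for grant in grants:
        if grant.verb is verb and resource.startswith(grant.resource_prefix):
            if grant.needs_human_approval and not human_approved:
                continue
            return True
    return False


# Hypothetical grants for a code-review agent.
review_agent = [
    Grant(Verb.READ, "repo/"),
    Grant(Verb.PROPOSE, "repo/services/billing/", needs_human_approval=True),
]
```

With these grants, the agent can read anywhere under `repo/`, can propose changes to the billing service only once a human has approved, and cannot send or execute anything at all, because no grant names those verbs.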

A CAN‑SPAM example raised at Purple Book was almost darkly funny: if you give a personal assistant agent the ability to send email without constraints, you may have accidentally built an unlawful spam engine. The defaults matter. The guardrails matter. IAM for agents is not optional plumbing; it’s the core of how we’ll govern AI in production.

Theme 3: The Vulnerability Deluge Is Coming

The theme that generated the most leaned‑in conversations was stark: over the next couple of years, we’re going to see an unprecedented flood of discovered vulnerabilities.

AI models are becoming genuinely capable at finding security issues in code — not just new code being written today, but the vast inventory of legacy code that’s been sitting in enterprise systems for years, largely unexamined because manual review simply couldn’t scale. Models can scan at a speed and breadth human reviewers never could.

In one sense, this is exactly what security has always wanted: better coverage, earlier detection, more issues found before attackers do. But when the number of discovered vulnerabilities jumps by an order of magnitude, today’s triage, prioritization, and remediation workflows will collapse under their own weight.

There’s also a quieter parallel track: zero days. If defenders can point models at old code and find issues, so can adversaries. Disclosed vulnerability pipelines are only half the story. Undisclosed vulnerabilities — found by threat actors, held quietly, and weaponized on their schedule — are likely to multiply in step with our own capabilities.

In that world, context becomes the new competitive advantage. The organizations that survive the deluge will be the ones that can rapidly contextualize findings:

- Which vulnerabilities actually matter in this environment?

- Which systems, data, and business processes do they touch?

- How do they intersect with existing controls, compensating measures, and compliance scope?
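
The three questions above can be sketched as a scoring step that adjusts a finding's base severity using what is known about the affected asset. Everything here is an assumption for illustration: the `Finding` and `AssetContext` fields, the multipliers, and the asset names are invented, and real weights would have to come from an organization's own risk model.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    id: str
    severity: float                # base severity, 0-10 (for example a CVSS score)
    asset: str


@dataclass
class AssetContext:
    internet_facing: bool
    handles_regulated_data: bool
    compensating_control: bool


def contextual_score(finding, context):
    """Adjust base severity using environment context (illustrative weights)."""
    score = finding.severity
    if context.internet_facing:
        score *= 1.5
    if context.handles_regulated_data:
        score *= 1.25
    if context.compensating_control:
        score *= 0.5
    return score


def prioritize(findings, inventory):
    """Return findings sorted by contextual score, highest first."""
    return sorted(
        findings,
        key=lambda f: contextual_score(f, inventory[f.asset]),
        reverse=True,
    )
```

Under these weights, a severity 6.0 finding on an internet-facing system holding regulated data outranks a severity 9.0 finding on an internal system that already sits behind a compensating control. The inventory lookup is the part that depends on discoverable architecture and data-flow knowledge.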

Enterprise search and knowledge management are suddenly security‑critical. The companies that have invested in making architecture diagrams, system inventories, data flows, and policy boundaries discoverable will be dramatically better positioned than those still hunting through wikis and tribal knowledge.

Theme 4: Regulations Are Coming Faster Than Culture

The regulatory landscape for AI is moving fast. There are already hundreds of AI‑related bills in motion, and the first frameworks explicitly designed for AI agents are emerging — early takes on a “SOC 2 for agents.”

The tension I heard most often was between organizations that see regulation as a drag on velocity and those that see it as an opportunity to project credibility. Notably, the latter group skewed toward security leaders in already regulated industries — banking, healthcare, defense — who have spent years building the operational discipline AI governance requires.

One line from a panel stuck with me: “We never solved internet security. We’re going to solve AI security piecemeal.” That wasn’t defeatist; it was historical. Internet security didn’t arrive via a single breakthrough. It accreted best practices, standards, and regulations over decades until most organizations had a defensible baseline. AI security will follow a similar path — just on a compressed timeline.

For organizations without deep technical staff, the advice was blunt: don’t roll your own.

- Buy as much security as you can from your cloud and SaaS providers.

- Build on platforms that have already done the hard work of making agents auditable, reliable, and compliant.

- Resist the urge to invent bespoke AI security infrastructure that will be fragile, expensive, and hard to keep up with a changing ecosystem.

This isn’t a cop‑out. It’s the same logic that led enterprises to buy firewalls instead of writing their own, and to move to cloud instead of running their own data centers. Specialization and shared infrastructure are how we make complex systems safe at scale.

Theme 5: Human Review Isn’t Dead, But Its Role Is Changing

One provocative data point surfaced in a session: human code review is only about 30% effective as a security control. The exact number is debatable, but the direction isn’t — humans miss things, especially under time pressure, with complex systems, and when social dynamics push toward “approve and move on.”

AI‑assisted review can dramatically improve coverage and consistency. But there’s an underappreciated risk: AI models reviewing each other’s work can be systematically sycophantic. Just as humans fall into groupthink, models trained to be helpful and nonconfrontational may rubber‑stamp each other’s outputs instead of challenging them. Adding more AI reviewers to a pipeline doesn’t automatically create independence; it may just amplify confident consensus.

The more useful framing I heard was this: AI review is a different kind of control, not simply a faster version of human review.

- AI can cover vast codebases and configs that humans will never have time to see.

- Humans can apply contextual judgment, ethics, and stakeholder knowledge that models don’t reliably access.

- Threat modeling for AI systems has to involve all of the classic domains — identity, application security, data, infrastructure, detection — together, not as a side project for the AppSec team.

The goal is not to replace humans with agents, or vice versa, but to deliberately design where each brings the most value in the lifecycle.

What Palosade Is Doing About It

Walking away from RSAC, I feel a deep sense of confirmation about the problems Palosade was built to solve — and a sharper understanding of exactly where the field needs us most.

First, we are doubling down on determinism by design. The non‑determinism problem in AI agents isn’t solved with better prompts. It requires architectural decisions about where randomness is acceptable, when to apply structured output constraints, when to require human checkpoints, and when to put deterministic validation layers on top of probabilistic outputs. For security use cases, the variance that’s tolerable in a consumer chatbot simply isn’t acceptable. Accuracy, consistency, and reliability aren’t features; they are the product.
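
As a generic illustration of a deterministic validation layer over probabilistic output (not a description of Palosade's implementation), the sketch below accepts a model's response only if it parses as JSON and passes fixed checks. The field names, the allowed severity values, and the function name `validate_finding` are assumptions.

```python
import json

# Hypothetical schema for a single security finding.
ALLOWED_SEVERITIES = {"low", "medium", "high", "critical"}
REQUIRED_FIELDS = {"title": str, "severity": str, "file": str, "line": int}


def validate_finding(raw_output):
    """Parse and check model output; return (finding, errors)."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["expected a JSON object"]

    errors = []
    for field_name, expected_type in REQUIRED_FIELDS.items():
        if field_name not in data:
            errors.append(f"missing field: {field_name}")
            continue
        value = data[field_name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            errors.append(f"wrong type for field: {field_name}")

    severity = data.get("severity")
    if isinstance(severity, str) and severity not in ALLOWED_SEVERITIES:
        errors.append("severity outside allowed set")

    return (data if not errors else None), errors
```

The same input always produces the same verdict, regardless of how the model arrived at its output. Anything that fails validation can be retried, escalated to a human checkpoint, or dropped, but it never flows downstream unchecked.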

Second, we are building auditability into everything. Every action an AI agent takes on Palosade’s platform is logged, traceable, and attributable — even for ephemeral agents. We assume that every finding, recommendation, and decision will one day be questioned by an internal audit, a regulator, or an incident review, and we design so our customers can answer the basic questions: what happened, why, based on which inputs, under which constraints.
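
The four audit questions (what happened, why, based on which inputs, under which constraints) map naturally onto a record that outlives the agent that produced it. The following is a hypothetical sketch, not Palosade's actual schema: the `AuditRecord` fields, the `record_action` helper, and the choice to store a SHA-256 digest of the inputs are all assumptions.

```python
import hashlib
import json
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    agent_id: str        # identity minted per agent run; persists after the agent is gone
    on_behalf_of: str    # the human or service the agent acted for
    action: str          # what happened
    rationale: str       # why
    input_digest: str    # based on which inputs (hash of the canonicalized inputs)
    constraints: tuple   # under which constraints
    timestamp: str       # UTC, ISO 8601


def record_action(on_behalf_of, action, rationale, inputs, constraints, agent_id=None):
    """Build an immutable audit record for one agent action."""
    canonical = json.dumps(inputs, sort_keys=True).encode("utf-8")
    return AuditRecord(
        agent_id=agent_id or f"agent-{uuid.uuid4()}",
        on_behalf_of=on_behalf_of,
        action=action,
        rationale=rationale,
        input_digest=hashlib.sha256(canonical).hexdigest(),
        constraints=tuple(constraints),
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
```

Because the identifier is minted when the agent starts and written into every record, an ephemeral agent's actions remain attributable long after the process itself has disappeared.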

Third, we are building for the vulnerability deluge, not just today’s ticket queue. The capacity to find issues at scale is only useful if it’s paired with the context to make them actionable. That’s why we’re investing in the connective tissue — integrating with enterprise knowledge, architecture documentation, runtime, and compliance scope — so our agents don’t just surface more problems, they help security teams decide what matters now.

RSAC 2026 felt different. Not because the hype is over — if anything, the urgency around AI agents in security has intensified — but because the conversations have matured. Leaders are less interested in dazzling demos and more focused on operational questions: How do we govern this? How do we audit it? How do we make sure it doesn’t create the very failures we exist to prevent?

One experienced CISO summed it up over breakfast: security shouldn’t feel exciting. It should feel like accounting — systematic, consistent, unglamorous, and essential.

The organizations that will win with AI are not the ones chasing the most impressive agents. They are the ones building the discipline to design, deploy, and govern those agents so predictably that they fade into the background, quietly doing the work, while humans stay focused on where we actually want to go.

About Palosade:

Palosade is the first agentic AI for software security with provable accuracy. It has context engineering for optimized results and built-in protections against hallucinations, retains knowledge and learns over time, and integrates seamlessly into the tools teams already use: Jira, Confluence, GitHub, Slack, cloud platforms, and more.

Learn more at www.Palosade.com, follow us on LinkedIn, and join the Palosade Community on Slack.